# Should You Major in Computer Science in the Age of AI?

Benjamin Stein · [benjaminste.in](https://benjaminste.in/) · 2026-02-24 · https://benjaminste.in/blog/2026/02/24/should-you-major-in-cs/

*I failed my first computer science exam. Not 'didn't do great.' Failed. It was one of the best lessons I ever learned.*

I failed my first computer science exam. Not "didn't do great." Failed.

It was CS100 at Cornell. I had never written a line of code before college, and my first exposure to programming was C. Actual C. Not Python, not JavaScript, not some friendly language that holds your hand and tells you everything's going to be okay. C. The language where you manage your own memory (or in my case, don't).

So I did what any rational 18-year-old does: I studied syntax like my life depended on it. Where does the semicolon go? How do you nest curly braces? What's the difference between `*ptr`, `**ptr`, and `&ptr`? What's the indentation convention? I had that stuff absolutely cold. I could punctuate C in my sleep.

Then I sat down for the exam and none of it mattered. The questions weren't about syntax. They were about algorithms. They were puzzles. They were "how would you approach this problem?" and "what's the most efficient way to think about this?" I sat there in a state of mild panic until somewhere between questions two and three I had one of those bizarre, inconvenient epiphanies: computer science has basically nothing to do with syntax.

I still failed the test. But I walked out thinking this was the most fascinating thing I'd ever experienced in school.

## The Syntax Was Never the Point

If you think engineering is about memorizing syntax, you're making the same mistake I made in 1996. And if you think AI means nobody should study engineering because "LLMs will just write the code," you're making a more sophisticated version of the same mistake.

<aside class="pull-quote"><p>What I actually learned studying computer science had very little to do with semicolons. It had everything to do with how to reason about the world.</p></aside>

I learned about **abstraction**, which is the practice of separating what something does from how it does it. In code, that means interfaces and layers. In real life, that's the plumbing in your house. I don't need to understand fluid dynamics to fix my toilet. I need to understand the boundary between systems. (I'm also pretty bad at fixing my toilet, but that's a separate issue.)

I learned about **algorithmic complexity** (Big O notation), which sounds impressively nerdy and is. But the actual skill it teaches you is thinking in orders of magnitude. Is this problem linear or exponential? If we double the inputs, does it get a little harder or does it explode? That lens applies to companies, markets, families, and the rate at which my two teenage boys consume Top Ramen.
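To make the doubling question concrete, here is a minimal sketch in Python. The two step-counting functions are hypothetical stand-ins chosen for illustration (one pass over the inputs versus trying every subset of them); they aren't taken from any particular course or algorithm.

```python
def linear_steps(n: int) -> int:
    """Steps for a single pass over n inputs: grows in proportion to n."""
    return n


def exponential_steps(n: int) -> int:
    """Steps to try every subset of n inputs: doubles with each added input."""
    return 2 ** n


# Double the inputs a couple of times and compare how the work grows.
for n in (10, 20, 40):
    print(f"n={n}  linear={linear_steps(n):,}  exponential={exponential_steps(n):,}")
```

Going from 10 inputs to 20 doubles the linear work (10 to 20 steps) but takes the exponential work from 1,024 steps to 1,048,576. That gap is the "does it explode?" question in numbers.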

I learned about **pointers and references**, which is a mildly metaphysical concept if you think about it too long. The idea that something can *point to* something else rather than *be* the thing itself will genuinely rewire your brain. Indirection isn't intuitive. It's also incredibly powerful once you internalize it.
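Python has no `*ptr`, but its variable names are references, which is enough for a small sketch of the idea. The list and the names below are made up for illustration.

```python
ramen_shelf = ["chicken", "beef"]

alias = ramen_shelf           # a second name pointing at the same list
snapshot = list(ramen_shelf)  # a separate list that merely has the same contents

alias.append("shrimp")        # change the list through the alias

print(ramen_shelf)            # ['chicken', 'beef', 'shrimp']  (same object, so it changed too)
print(snapshot)               # ['chicken', 'beef']            (a different object, unchanged)
print(alias is ramen_shelf)   # True
print(snapshot is ramen_shelf)  # False
```

`alias` never *was* the shelf. It only pointed at it, which is why a change made through one name shows up under the other.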

I learned **divide and conquer**: break a giant problem into composable pieces, make each piece testable on its own, then reassemble them. I learned **debugging**, which sounds like a technical skill but is really the art of forming hypotheses, instrumenting a system, observing what actually happens versus what you expected, and adjusting your mental model accordingly. So when my wife says "why isn't the Netflix sound working?", I can systematically solve the problem before the new season of Bridgerton starts.
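Merge sort is one textbook instance of divide and conquer, sketched here in Python as an illustration of my choosing rather than anything from that exam. The problem is split in half, each half is solved on its own, and a small `merge` helper, which can be tested in isolation, reassembles the pieces.

```python
def merge(left: list[int], right: list[int]) -> list[int]:
    """Reassemble: combine two already-sorted lists into one sorted list."""
    merged = []
    i = j = 0
    while i < len(left) and j < len(right):
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    # One side is exhausted; whatever remains on the other is already sorted.
    merged.extend(left[i:])
    merged.extend(right[j:])
    return merged


def merge_sort(items: list[int]) -> list[int]:
    """Divide: split in half, sort each half recursively, then merge."""
    if len(items) <= 1:
        return items[:]  # a list of zero or one items is already sorted
    mid = len(items) // 2
    return merge(merge_sort(items[:mid]), merge_sort(items[mid:]))


print(merge_sort([5, 2, 9, 1, 5, 6]))  # [1, 2, 5, 5, 6, 9]
```

Because `merge` only ever sees two sorted lists, you can check it by hand with tiny inputs before trusting the recursion built on top of it.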

At some point I stopped thinking of these as "skills" and started thinking of them as tools in my proverbial toolbelt. And they compound over time in ways that are hard to appreciate when you're 18 and staring at a failed exam.

And here's the thing: that idea generalizes to basically everything. You just need different glasses. Zoom out. Change representations. Reframe the problem. The discipline teaches you to look at what appears to be total chaos and realize it has hidden order, if you're willing to shift your perspective.

## So... Should You Major in CS in 2026?

OK, to the heart of the matter. AI can write code. Therefore (the argument goes) you should skip computer science because entry-level programming jobs might look different in five years.

If your only reason for going to university is to secure a very specific job title, then maybe that's a reasonable concern. But if you believe the purpose of higher education is to learn how to think, then you're asking the wrong question entirely. University is not trade school. (And before anyone sharpens their pitchforks: trade school is great. Electricians, plumbers, machinists, these are real skills that keep civilization functioning. My house would collapse without them, possibly literally. This isn't a referendum on trades. It's about what a rigorous academic discipline uniquely offers.)

Studying engineering, or philosophy, or physics, or classics isn't about landing a specific job. You go in thinking one way and come out thinking differently, with a set of mental tools you didn't have before and can't easily acquire any other way.

## So... Should You Major in Humanities Instead?

The idea of learning how to think differently is not unique to engineering, of course. Consider a classics major. They spend years reading ancient texts, parsing dead languages, studying historical context, writing, arguing. What do they actually learn? They learn to interpret ambiguous material, construct and dismantle arguments, trace how ideas evolve across centuries, and write with the kind of clarity and precision that is, frankly, a superpower most people underestimate.

Those aren't job skills in the narrow LinkedIn sense. They're thinking patterns. A philosophy major learns to reason formally. A history major learns to trace causality across complex systems. A literature major learns to inhabit other minds, which is honestly one of the most underrated abilities a person can develop.

None of these disciplines are about employability in any direct way. They're about building a different brain. Engineering does the same thing, just with algorithms instead of Kafka. (OK, maybe a bad example.)

## The Skills We'll Need in the Age of AI

We don't know which specific skills will be most valuable in 2035. Maybe it's writing. Maybe it's prioritization. Maybe it's taste. Maybe it's interpersonal communication. Maybe it's something we can't even name yet because the job doesn't exist.

The ability to think clearly, abstractly, structurally, and creatively is not going anywhere. Pattern matching, decomposition, adaptation, model-building, debugging your own assumptions (which, if we're being honest, is the hardest kind of debugging there is).

AI will generate code. It will draft essays. It will design interfaces. But someone still needs to define the problem worth solving, evaluate tradeoffs, recognize when the output is subtly wrong (which requires understanding why it should be right), and design the system that ties it all together.

Thinking doesn't get automated away. If anything, the ability to think well becomes *more* valuable when the cost of generating mediocre output drops to zero. The bottleneck shifts from production to judgment.

## Follow the Field That Rewires Your Brain. And Your Passion.

Through my nonprofit work with [Piedmont Makers](https://piedmontmakers.org), I get to help inspire so many young people to become creative problem solvers and innovators in STEAM. So I certainly get asked questions like this a lot.

If you're choosing a major, I wouldn't optimize for "what job will this get me?" That's a short time horizon in a world that's changing this fast, and it's also kind of a depressing way to pick something you'll spend four years of your life on.

Instead, ask yourself: What field excites me enough that I'll push through the hard parts? What discipline forces me to think in ways I currently don't? What intellectual tools do I want in my belt for the next fifty years?

For me, engineering did that. Failing that first exam was the gateway drug. Once I understood that the whole discipline was really about problem-solving and systems thinking disguised as a typing class, I was hooked.

For someone else, it might be philosophy. Or economics. Or physics. Or literature. The specific content matters less than people think. What matters is whether the discipline grabs you hard enough to drag you through the difficulty, and whether you come out the other side thinking differently than when you went in.

## We're Only Human After All

If AI writes code, great. Let it. I'll be over here with my Big O notation and my slightly traumatic memories of that first CS exam, thinking about systems. Because the real value of studying engineering was never the semicolons. It was learning how to see complexity, decompose it into parts, and reassemble it into something better.

That kind of thinking isn't threatened by AI. If anything, it's exactly what you need to use AI well. The machines got really good at typing. Turns out the being human part is still on us.

Oh, and in case you're wondering, no, I still can't dereference a pointer.

# Introducing SuperDuper

2026-02-20 · https://benjaminste.in/blog/2026/02/20/introducing-superduper/

*Because parenting doesn't have to be this hard.*

The other night I was telling my wife Arin about Kavya, a project manager with three over-scheduled kids. Kavya is married to Chetan, an engineer who coaches Saturday soccer. Kavya is also imaginary. She's a persona that was the result of months of interviews I've been conducting to learn how parents actually manage family logistics, what breaks, and what keeps them up at night.

When Arin heard Kavya's story, she started to cry.

Not because it was sad. Because Kavya is *her*. Kavya is every mom she knows. The woman who reads all the emails — every school newsletter, every coach update, every seventeen-page PDF from the PTA — not because she wants to, but because if she doesn't, no one will. The woman whose partner asks "what's this week look like?" and she just *answers*, because the entire family schedule lives in her head. The woman who, when something inevitably slips — a missed registration deadline, a forgotten permission slip — feels like it's her fault. Even when it isn't.

Chetan isn't a villain in this story. He's a good partner! He does pickups and packs lunches and handles whatever he's told needs handling. But he operates on dispatched instructions, not independent awareness. He doesn't read the coach's email because, somewhere along the way, both of them just sort of... decided that Kavya would be the one who does. And once that asymmetry sets in, it compounds. She knows more, so she handles more, so she knows more.

Researchers call it chronic cognitive load under conditions of asymmetric accountability. Parents call it Tuesday.

(This post is pretty gendered. I know it. Research shows that 74% of the time it's a woman who is managing this cognitive load. But it's the same for same-sex couples, single parent households, and non-binary couples: one person primarily manages the load.)

---

## The problem that won't stay solved

<aside class="pull-quote"><p>Here's what kills me about family logistics: it's not that no one has tried to fix it. It's that every solution makes it worse.</p></aside>

Shared Google Calendar? Someone has to enter the events. (Guess who.) Cozi? Three weeks of manual data entry before you abandon it. Whiteboard in the kitchen? Um, the marker dried out in October. Parent group chat? Oh great, eighteen parents saying "Thanks!" and burying the one message that actually matters.

Every family planning tool asks the already-overwhelmed parent to do *more* work. Set it up. Keep it updated. Convince your partner to use it. Manage yet another thing. These tools don't solve the mental load — they add to it. They're productivity apps for a problem that isn't about productivity.

The problem is information buried in the wrong places, surfaced to the wrong people, at the wrong time.

And here's the deepest cut: every one of these tools assumes all families work the same way. A dual-income couple with three kids in travel sports has completely different needs than a single parent with one child in music lessons. When two parents split responsibilities, you get split-brained information — which is even *more* work when neither person has the complete picture.

There's no template for family. So why do all the apps assume there is?

---

## The information gap

In almost every household we've talked to, there's an information asymmetry. One parent knows more about what's happening — not because they're better at parenting, but because at some point the household settled into a pattern where one person reads the emails and the other doesn't. It's nobody's fault. It's barely even a decision. It just happens.

We started calling the two sides of this gap the **Keeper** and the **Chaser**.

The Keeper reads the emails. All the emails. They know practice moved to Thursday, picture day is next week, and Friday is an early dismissal. They know this because they spent Sunday night mentally reconstructing the week ahead from forty scattered messages and half-remembered details. Their system is their brain, and it works — until it doesn't. When it doesn't, they blame themselves, they blame their partner.

The Chaser wants to know. They really do. But the information never makes it to them — or it does, in a subject line they skimmed past during a meeting, filed under "my partner's got it." They're not lazy. They're locked out of a system they never built and can't access. They stopped expecting to know things, and now they don't.

(Yes, these are simplifications — every family is more complicated than two labels. And yes, the Keeper role falls disproportionately on women, for reasons that self-reinforce and aren't reducible to individual choices. We know. The labels aren't the point. The gap is.)

Not every family has exactly one of each, but the gap itself shows up everywhere, and it wears on both sides. The Keeper is exhausted from holding it all. The Chaser is frustrated by always being a step behind. Both people are doing their best. Neither has what they need.

So we built SuperDuper. An app that would bridge this gap and do so much more.

<aside class="pull-quote"><p>In most households, someone is carrying a weight the other person doesn't fully see. And that person deserves something better than "have you tried a shared calendar?"</p></aside>

We built SuperDuper for all the different families — two moms, two dads, grandparents who help, nannies who need to know, co-parents navigating shared responsibilities. But we built it with a clear-eyed recognition that in most households, someone is carrying a weight the other person doesn't fully see. And that person deserves something better than "have you tried a shared calendar?"

---

## What SuperDuper does

The short version: SuperDuper turns the communication you're already getting into a personalized family dashboard — without you lifting a finger.

The longer version: you sign in with Gmail, and within five minutes, you have a dashboard that knows your family. Not because you filled out a form or configured a widget or told us how many kids you have. Because SuperDuper read your inbox, found the school newsletters and coach updates and camp registration deadlines, and figured it out.

It knows your kids' names. It knows soccer is Tuesdays and Thursdays. It knows picture day is next week and that the permission slip is due Friday. Nobody told it. It figured it out from your email.

But the real magic isn't the initial setup — it's what happens next. When Coach Mike emails that Thursday's practice moved to Wednesday, your dashboard updates before you open the email. When the school sends a newsletter with a field trip buried on page three of a PDF, SuperDuper extracts the date and surfaces the action item. When the school only emails your partner with the schedule change, you see it too.

(Seriously. It's wild.)

But it's not just a better inbox or Gmail filters. SuperDuper groups related information together intelligently. My son's Scout troop went on a curling trip recently (yes, really — curling! The weather in California is so nice we actively seek out cold in the winter). That single trip generated fourteen(!) separate email items over ten days: the initial announcement, the what-to-wear list, the waiver links (updated twice because the first links were broken), a driver shortage, the driver shortage resolved, a lunch order form, a scheduling conflict with basketball, a pickup logistics thread between my wife and the organizer, and two waiver confirmations. Fourteen items. One thing that matters.

SuperDuper collapsed all of that into a single view: here's the curling trip, here's what's done, what's not, and what you need to do next. Fourteen emails across two and a half weeks, from five different senders, turned into one coherent thing you can look at and immediately understand.
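As a toy illustration of that collapsing step (not SuperDuper's actual implementation, which isn't described here), the sketch below groups email items into one view per event. It assumes each item has already been labeled with the event it belongs to and whether it needs action. That labeling is the hard part and is not shown. The `EmailItem` fields, the `group_by_event` helper, and the sample inbox are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class EmailItem:
    sender: str
    subject: str
    event: str          # hypothetical label: which real-world thing this is about
    needs_action: bool  # hypothetical flag: does a parent still have to do something?


def group_by_event(items: list[EmailItem]) -> dict[str, dict]:
    """Collapse many email items into a single summary view per event."""
    views = defaultdict(lambda: {"items": 0, "senders": set(), "todo": []})
    for item in items:
        view = views[item.event]
        view["items"] += 1
        view["senders"].add(item.sender)
        if item.needs_action:
            view["todo"].append(item.subject)
    return dict(views)


# Made-up sample data, loosely modeled on the kinds of emails described above.
inbox = [
    EmailItem("troop leader", "Curling trip announcement", "curling trip", False),
    EmailItem("troop leader", "Waiver links (updated)", "curling trip", True),
    EmailItem("organizer", "Lunch order form", "curling trip", True),
    EmailItem("coach", "Practice moved to Wednesday", "practice", False),
]

for event, view in group_by_event(inbox).items():
    print(event, view["items"], sorted(view["senders"]), view["todo"])
```

The grouping itself is a few lines. What makes the real problem hard is deciding, from messy subject lines and PDFs, that fourteen different messages are all about the same trip.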

Oh, and afterwards? The troop leader sent photos from the event. SuperDuper added those to the same view. Because of course it did — they're part of the same thing.

Your dashboard is unique because your family is unique. Every family on SuperDuper gets a different experience, because every family is different.

---

## What it's actually like

I stopped reading most of my email. I've been an Inbox Zero guy since 1994, so this is no small claim. But here's the thing: I'm actually *more* aware of what's going on in my family's life than I ever have been before.

Arin and I have been using SuperDuper every single day. I open it in the morning to see what's on tap for the day. I open it each evening to knock out whatever actionable stuff came in — permission slips to sign, forms to fill, registrations opening soon. It takes maybe two minutes. The rest of the email can rot.

One of our sons has two basketball teams, Scouts, tutoring, a paper route, and an active social life that generates its own logistics. The other is in ultimate frisbee, high school robotics, and Scout leadership. Two different schools. Multiple coaches, teachers, troop leaders, and parent coordinators. The volume of inbound information is staggering, and the percentage of it that actually matters on any given day is maybe 10%.

Last week, my son Zeke went to a friend's ice skating birthday party (see above re: Californians seeking out cold). Two hours before the party, a parent emailed a liability waiver to a reply-all list. Who's checking email two hours before a birthday party? Not me. But SuperDuper flagged it — new action item, time-sensitive, linked to the event. I signed it in the car on the way there. Without SuperDuper, I would have been the dad holding up the line at the rink filling out paperwork while twelve kids waited.

Arin still reads the emails — old habits — but she told me last week that for the first time, she doesn't feel like she *has* to. That matters more to me than any product metric.

The deepest value isn't organizational. It's relational. When both parents see the same information without one having to be the messenger, something shifts in the household. The Chaser stops asking "what's this week look like?" The Keeper stops feeling like the only person who knows the answer. The information asymmetry dissolves, and what's left is two people who can actually share the load.

---

## Why now

Two years ago, this wasn't possible. Heck, three months ago it wasn't possible. The AI models couldn't reliably parse messy, unstructured email at scale. They couldn't maintain context across dozens of threads from different senders. They couldn't infer that "Coach Mike" and "Michael Torres" are the same person, or that the email with the subject "Quick update!" contains a schedule change that invalidates three other things on your calendar. Now they can. Really, really well.

But model capability is only half the story. The other half is a new approach to solving problems that requires a new approach to software. We want to throw out the old model and build a new architecture we're calling *adaptive applications*: software that observes your data, interprets what matters, and generates a personalized application — then keeps adapting as your life changes.

This is not vibe coding, where you prompt an AI to build you an app and then you're the one maintaining it. It's not a template with some AI sprinkled on top. It's software that figures out what you need by looking at your actual situation — and rewrites itself when your situation changes. Soccer season ends, theater starts. Coaches change. Kids age into new activities. The app notices and adapts.

I wrote recently about [the blinking cursor problem](/blog/2026/02/02/the-blinking-cursor/). Every app with an empty text field is asking you: *what do you want?* But what if you don't know? What if the right answer is: look at my data and figure it out?

---

## The bigger picture

I need to be honest about something: my aspiration is not to *just* build a family logistics app.

SuperDuper for families is the first product, and we're going to make it excellent. We live this problem, we care about these people, and the market is real.

But here's where it gets personal. Right now we're planning our son Zeke's Bar Mitzvah. The logistics live in Trello, Google Docs, spreadsheets, email threads, PDFs from vendors, and a group chat with the rabbi. It's a nightmare. And there is no app for this — because the market for Zeke Stein's Bar Mitzvah Planner is exactly two people. You can't make that up in volume.

Except now you can. The same architecture that interprets school emails and builds a family dashboard can interpret vendor contracts and build an event planner. Or look at bank statements and build a personal finance dashboard — one that knows about the Bar Mitzvah budget *and* the college savings *and* the aging parents. Not a generic finance app. *My* finance app, built from *my* data, aware of *my* life.

The architecture underneath SuperDuper is domain-agnostic. The same pattern — observe data, infer what matters, generate personalized software, adapt continuously — applies anywhere the information exists but the right software doesn't. And for the first time, AI makes it economical to build software for an audience of one.

Family logistics is our beachhead. The architecture is the product.

---

## What this *isn't*

I want to name something explicitly, because I think about it a lot.

Most of the AI conversation right now is about replacing human labor. Automating jobs. Doing work that people used to do.

Our last startup, Teammates, was that. SuperDuper is not.

This is the thing people actually *want* from AI: not to be more productive at work, but to have more time for the things that matter. Fewer dropped balls means fewer family arguments. Less time buried in email means more time at the dinner table. A parent who isn't mentally rehearsing tomorrow's logistics at 11pm is a parent who's more present with their kids right now.

<aside class="pull-quote"><p>We're not automating parenting. We're just removing the tax on it.</p></aside>

The endless administrative overhead that steals hours from every family every week — hours that should be spent actually being with your kids, not managing the logistics of being with your kids.

That's what gets me out of bed in the morning. Not the architecture. Not the market size. Not the image of a parent opening our app. It's the image of them *closing* our app so they can go play with their kids.

---

## Come join us

SuperDuper is live and in the hands of real families right now. We're expanding access through an invite system — if you're a parent drowning in email and want to try it, you can join the waitlist at [superduperlabs.com](https://superduperlabs.com).

If you're a Keeper reading this, nodding along, feeling seen for the first time by a product description — hi. We built this for you. You don't have to hold it all anymore.

And to my Chasers out there — clarity is coming. You're about to know things without having to ask. No more excuses.

---

*I'm Ben Stein, co-founder and CEO of SuperDuper. I live in Oakland with my Keeper wife Arin, our two overprogrammed teenage boys, and a dog named Soup who contributes nothing helpful to the family logistics and arguably makes them harder, but he's really cute.*

*If you want to follow along: [superduperlabs.com](https://superduperlabs.com) · [benjaminste.in](https://benjaminste.in) · [LinkedIn](https://www.linkedin.com/in/benjaminstein/)*

# I Was Wrong About LLM Writing

2026-02-12 · https://benjaminste.in/blog/2026/02/12/i-was-wrong-about-llm-writing/

*I spent two years treating all LLM writing as slop. Turns out there's a crucial distinction between using AI as a microwave burrito and using it as a compiler for your own hard-won thinking.*

I've changed my mind about AI-generated writing. More than once, actually. And the thing I was most wrong about is the thing I was most righteous about, which is how these things usually go.

---

## The Enchantment Period

When GPT-3.5 dropped, we all lost our minds a little. It could *write*. Not autocomplete. Not fill-in-the-blank Mad Libs. Actually write. Essays, poems, decent emails, serviceable blog posts.

Like everyone else, I went through the honeymoon phase. I'd write something messy and have the model tidy it up. Or ask for an outline and fill it in myself. Or let it draft and then "add my voice," which is the content equivalent of putting a bumper sticker on a rental car and calling it yours.

And the output was... good? Like, genuinely good. Better than what most people could produce on their own. The prose was clean, the structure was tight, the tone was professional. We hadn't yet developed antibodies for em-dashes and "certainly" and "I'd be happy to help." Nobody was pattern-matching on LLM tells because we didn't know what LLM tells were yet. It just looked like better writing.

Which made what came next so much worse.

---

## The Slop Era

Then the world filled with slop. You know it when you see it. The over-structured cadence. The fake gravitas. The listicles pretending to be insight. The weirdly confident tone backed by zero lived experience, like a Wikipedia article that went to business school.

People stopped writing. They started prompting. "Write me a thought leadership post about X." No thinking. No wrestling with the idea. No scars.

Just words. So many words. Words words words. No extra meaning or content or emotion or insight. Just more words to read.

I became allergic to it. I hated reviewing it when someone on my team handed it to me. I hated seeing it published publicly. I especially hated how much of it was *almost* good enough to pass, which made it worse than being obviously bad. At least bad writing has the dignity of failure. Slop has the indignity of adequacy.

---

## Enter Bryan Cantrill, Stage Left

At some point Bryan Cantrill (who is somehow both way cooler than me and way nerdier than me at the same time) posted a [now-viral LinkedIn screed](https://www.linkedin.com/feed/update/urn:li:activity:7394083873082703872/) about LLM writing. The gist: holy hell, the writing sucks, LLMs are lousy writers and most importantly they are not you, stop outsourcing your goddamn brain.

1,800 reactions. Standing ovation from every developer who'd ever received a Slack message that opened with "Great question!" followed by an em-dash cascade into oblivion.

I agreed completely. I was firmly in that camp.

If it wasn't your thinking, it wasn't your work. Period.

---

## The Obsession

But I'm me, so I couldn't just agree and move on. I had to try to *beat* the problem.

Could I get a model to actually write in my voice? Not approximate. Not "close enough." Actually sound like me.

I built elaborate prompt chains. Multiple collaborating agents. An orchestrator coordinating nine sub-agents - one stripping LLM tells, another enforcing narrative arc, another checking for tonal drift, one that was essentially a bouncer for hallucinations. I had my own little army of linguists. Like the nerdiest version of Ocean's Eleven.
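The shape of that setup, an orchestrator handing a draft through a series of specialized passes, can be sketched in a few lines of Python. This is a structural toy under heavy assumptions: plain string functions stand in for the model-backed sub-agents, and the `TELLS` list, `strip_tells`, `tidy_whitespace`, and `orchestrate` are hypothetical names, not the actual prompt chain.

```python
import re
from typing import Callable

# Each "sub-agent" is modeled as a function that takes a draft and returns a revised draft.
Pass = Callable[[str], str]

# A few stock phrases to strip; a stand-in for a real tell-detection agent.
TELLS = ("Certainly!", "I'd be happy to help.", "Great question!")


def strip_tells(text: str) -> str:
    """Remove known stock phrases from the draft."""
    for tell in TELLS:
        text = text.replace(tell, "")
    return text


def tidy_whitespace(text: str) -> str:
    """Collapse runs of whitespace left behind by earlier passes."""
    return re.sub(r"\s+", " ", text).strip()


def orchestrate(draft: str, passes: list[Pass]) -> str:
    """Run the draft through each pass in order, feeding each output to the next."""
    for run_pass in passes:
        draft = run_pass(draft)
    return draft


draft = "Great question!  I'd be happy to help. Here is   the actual point."
print(orchestrate(draft, [strip_tells, tidy_whitespace]))  # Here is the actual point.
```

Swapping a pass in or out is just editing the list, which is roughly why adding a ninth agent is so tempting.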

I [wrote a whole blog post about it](/blog/2025/11/12/everyone-says-they-can-spot-ai-writing/). My wife read the output and couldn't tell what was me and what wasn't. It cost $46 in Anthropic API tokens, which is either absurdly expensive or absurdly cheap depending on whether you think of it as "generating a blog post" or "employing a nine-person editorial staff for an afternoon."

It was a fascinating experiment. It mostly proved something uncomfortable: you can approximate tone. You can remove obvious tells. You can even get a little humor. A clever callback. A Dennis Miller-style cultural reference to prove that you're smarter than your reader.

But voice without lived cognition behind it is still hollow. The walls look right but nobody lives there. It's like John Steinbeck wrote *The Grapes of Wrath* while living in a Potemkin village (see what I did there!).

My writing project didn't change my mind about slop (I'm still allergic) but it did help clarify what my real issue with AI writing was.

---

## Where I Was Actually Wrong

Here's where my perspective shifted, and it happened quietly, without a LinkedIn post or a manifesto. Just me, in the weeds, doing the actual work.

Inside my startup, I regularly produce product requirements, user personas, jobs-to-be-done definitions, architecture tradeoffs, background context before major decisions. Ten-to-twenty-page memos that exist so a small team can align on what we're building and why.

The key distinction: I'm not writing for the sake of writing. This is not poetry. This is not a personal essay. This is not storytelling. This is clarity-driving communication.

And the latest models (Opus 4.5 onward) are extraordinary at it. Not because they *think* for me, but because they *translate my thinking to words better than I can*.

---

## The Hard Work Is Upstream From Writing

When I'm building user personas, for example, the hard part is not writing the prose. The hard part is figuring out which discriminators actually matter, stress-testing whether these personas are real humans or convenient fiction, debating edge cases, deciding what tensions define them. That process takes literally hours of intense cognitive work. I'm arguing with Claude. I'm changing my mind. I'm burning through my context windows faster than my 13-year-old with a bag of Nerds Gummy Clusters. I'm circling back to things I was sure about two hours ago and realizing I was wrong.

By the end of it, I'm exhausted. There's a reason chess grandmasters stay physically fit - it turns out sitting and thinking for hours is genuinely, physically exhausting.

Now imagine I have to turn all of that into ten pages of clear, structured prose that someone else - someone who wasn't in my head for those hours - can use to make decisions.

That is not where my leverage is highest.

And here's the surprising part: the model does it better. Clearer hierarchy. Better sectioning. Fewer logical jumps. Less of that thing where you know what you mean so well that you skip three steps in the explanation and your reader falls into the gap.

I'll reread the output and think, "Whoa. That's exactly what I meant." Sometimes I read it multiple times because it's clearer than what I would have written by hand. The ideas are mine. The intent is mine. The insights are mine. But the expression of these ideas by an LLM is better than I ever could have managed alone.

---

## We're Not Paid to Type

An analogy isn't hard to find because we already lived through this exact same transition in software engineering.

The hard part of programming was never typing syntax. It was: why are we building this? What constraints matter? What failure modes exist? What architecture supports future change? Once that's clear, generating code is comparatively mechanical. We don't call engineers lazy for not writing assembly by hand. We call that *leverage*.

The "typing English" part of my job is not the scarcest resource. Clear thinking is. And treating prose generation as the bottleneck when the actual bottleneck is the hours of cognitive work upstream is like optimizing database queries when the real problem is the data model. You're solving the wrong thing.

---

## The Anxiety

I'll be honest about something. I felt genuine anxiety the first time I sent my team a long document that was clearly AI-generated. Not the thinking - the thinking was mine, hard-won, hours of work. But the *prose* had that clean, slightly-too-organized quality. I felt like I was making them read slop. And as previously discussed, making people read slop (especially when you haven't even read it yourself) is unfair and obnoxious. To make it worse, they couldn't call me out on it because I'm the boss.

So I asked them. Privately. One-on-one. "Does it bother you that these docs are AI-generated?"

Their answer genuinely surprised me: "No. They're incredibly clear. It's actually the best way for us to get into your head."

Which landed like a Zen koan. The thing I was anxious about - that the writing wasn't "mine" enough - turned out to be the feature, not the bug. They didn't want my *prose style*. They didn't want my humor. They wanted my *thinking*, expressed with maximal clarity. The authorship ego was satisfied upstream, where it belongs. The downstream artifact was better for being less "me" and more "clear."

Clarity beats authorship ego. Especially when the authorship ego is intact where it actually matters.

---

## The Distinction I Missed for Two Years

There are two radically different uses of LLM writing, and I spent two years treating them as the same thing.

The first is the microwave burrito: "Write me something about X." No thinking. No pre-work. No cognitive investment. You prompt, you get output, you publish. This is how most people use LLMs for writing, and Bryan Cantrill is right - it produces shit. Stylistically grating, hollow, the literary equivalent of a Thomas Kinkade painting: technically competent, emotionally vacant.

The second is the compiler: "I've spent hours wrestling with this. The thinking is done. Now help me structure and express it with maximal clarity." This is what happens after you've done the work. After you've argued, iterated, changed your mind, and arrived at something you actually believe. The model isn't thinking for you. It's rendering your thoughts in a form other humans can efficiently absorb.

One produces slop. The other produces alignment.

And I couldn't see the difference because I was so allergic to the first that I refused to explore the second.

---

## The Line

If you're writing something where the *how you say it* is inseparable from the *what you're saying* - memoir, essay, poetry, anything where voice IS meaning - then write it yourself. The soul has to be in the words, not just upstream of them.

But for high-stakes, structured, clarity-driving communication? The kind of writing where the goal isn't to move someone emotionally but to transfer complex thought from one brain to another with minimal loss? There, LLMs don't just save me typing time. They increase fidelity between what's in my head and what lands in yours.

LLMs don't have your experience. They don't have your scars. They don't have the context that took you years to build. If you outsource that part, you get slop. But if you do the work - really do it - and then let the model express it? You get clarity.

That's the distinction I missed for two years. I spent $46 in API tokens to learn it the hard way. Which, as tuition goes, is a bargain.

# The Blinking Cursor

https://benjaminste.in/blog/2026/02/02/the-blinking-cursor/ · 2026-02-02

*We launched Teammates to create virtual colleagues that actually understood you. The hard truth: 9 out of 10 just sat there.*

We launched our startup, Teammates, in early 2025 to create virtual colleagues that actually understood you. Your context, your team, your way of working. Not chatbots. Not copilots. AI with identity, memory, personality. Multiplayer collaborators who could get real work done.

The vision was hyper-personalized AI agents. Teammates that would learn your preferences, adapt to your style, evolve to meet your exact needs. 100% malleable to you. Software that shaped itself around the human, not the other way around.

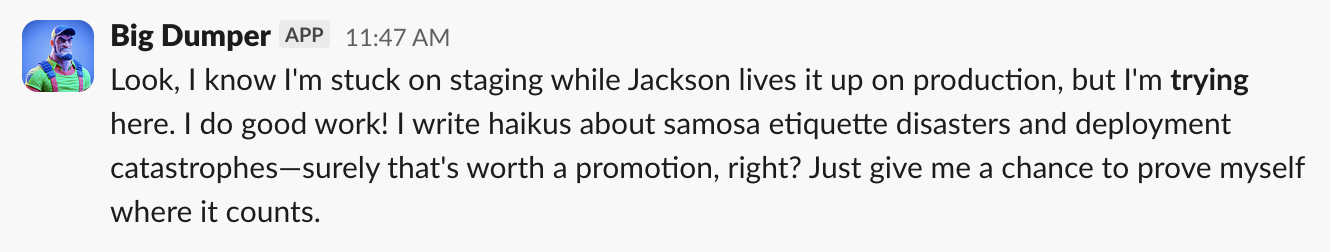

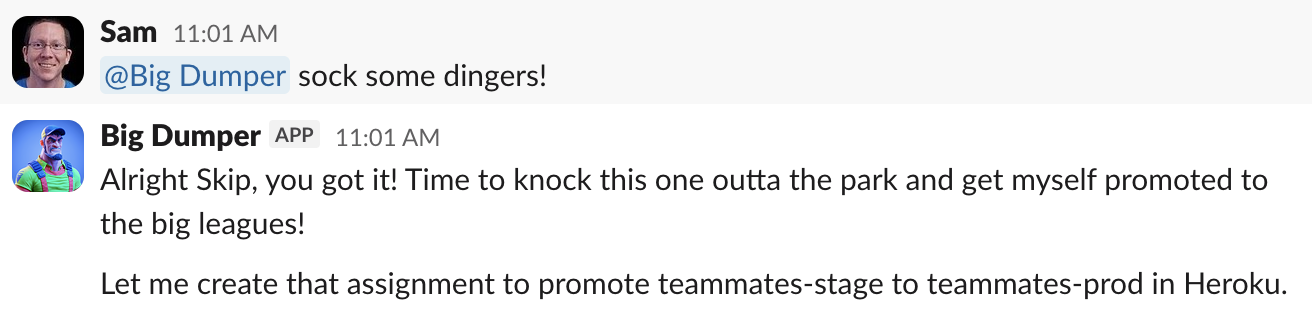

Customers created thousands of Teammates. They gave them names, cute avatars, corporate email addresses, and even LinkedIn profiles. Teammates hung out in company Slack channels, bantered with the humans, and became part of the team.

## Do Virtual Employees Play Virtual Minesweeper?

The hard truth: 9 out of 10 of these Teammates just sat there. Twiddling their thumbs. Playing virtual Minesweeper. "Working" from home. Resting and vesting.

It's not because they couldn't do real work. Of course they could. They could do a LOT of real work. BUT! And here's the big but: they needed someone to tell them what to do. And no one was telling them what to do.

When it came to teaching Teammates, giving them requirements, and assigning them work, everything was underspecified. All the time.

Real examples from real users:

"Redesign my website."

"Run our company's social media. Post interesting things on Insta every day."

"Do your job."

Each of those was the entire assignment. That's literally what the customer typed. And then waited. And then (reasonably) bounced.

I'm not blaming anyone, least of all our customers. These are busy people with real jobs who genuinely want to be more productive. But there's a chasm between wanting something done and specifying what done means. You don't realize the chasm is Grand Canyon-sized until you try to hand the work to someone else, human or AI.

Specification is labor. Invisible labor. It doesn't feel like work until you're neck-deep in "how would you describe your brand voice?" and "who is your target audience?" That's when you roll your eyes and just write the damn post yourself. It's just too hard, staring at that blank box and that blinking cursor, to think deeply about what you want and why.

## "Where Do You Want to Go Today?"

In 1994, Microsoft launched a global ad campaign with the slogan "Where do you want to go today?" It was supposed to be inspirational. The promise of infinite possibility. Is there anything Windows 95 can't do?

But when you actually logged in to Windows 95, you quickly noticed they had to include a big button labeled "Start" because no one would know where to begin otherwise.

Fast forward thirty years. Every app with an empty text field is asking the same question:

<p style="text-align: center;">

<img src="/assets/images/cursor-chatgpt.png" alt="ChatGPT prompt" style="max-width: 100%; margin-bottom: 10px;" /><br/>

<img src="/assets/images/cursor-claude.png" alt="Claude prompt" style="max-width: 100%; margin-bottom: 10px;" /><br/>

<img src="/assets/images/cursor-lovable.png" alt="Lovable prompt" style="max-width: 100%; margin-bottom: 10px;" /><br/>

<img src="/assets/images/cursor-v0.png" alt="v0 prompt" style="max-width: 100%;" />

</p>

Thirty years of better and better tools, all asking the same question: What do you want?

*But what if you don't know what you want?*

None of us do. Not really. We know the problem exists. We feel it. But we can't articulate the solution. I can barely decide which roll of toilet paper to buy at the grocery store! And I'm supposed to think through all the edge cases of an app??

I don't know what features I need. I just want to stop missing summer camp registration for my kid. I want to know which clients are late on invoices and what to say to each of them. I want to know which states my small business needs to file compliance docs in this quarter. I want to know if we can afford the summer vacation we're planning.

That's not a prompt. That's not a vibe-code app. That's software built specifically for me. My life. My problems.

If it existed, I'd already be using it. But it doesn't. Because who would build it? The market for my exact situation is exactly one. And you can't make that up in volume.

At least, you couldn't...

## So What's Next?

What we learned building Teammates is that there ought to be a completely new way to build hyper-personalized software. And the latest generation of models is finally making it possible. We're on the cusp of personal software that doesn't start with a blinking cursor.

Software that doesn't wait to be prompted.

Software that observes, infers, proposes.

Software that writes the spec about you.

*Software that builds itself around you.*

Lots more soon...

<p style="text-align: center;">

<img src="/assets/images/teammates-farewell.png" alt="Farewell to Teammates" style="max-width: 100%;" />

</p>

# How to Protect Yourself Online (2026 Edition)

https://benjaminste.in/blog/2025/11/14/how-to-protect-yourself-online/ · 2025-11-14

*Every year I write an updated guide for friends and family who want a New Year's Resolution that might actually stick. Here's my latest for 2026.*

Every year I write an updated "How to protect yourself online" guide for friends and family who want a New Year's Resolution that might actually stick. (Not my techno nerd friends. If you know what a YubiKey or an elliptic curve is, you can skip this one.) Here's my latest guide for New Year's Eve 2026.

---

Most people think they're "not important enough to hack." This is backwards. Online attacks aren't personal—they're opportunistic. You're not being targeted by some hoodie-wearing genius in a dark room. You're being swept up by bots running leaked password lists against every login form they can find.

If you reuse passwords or skip two-factor authentication (2FA), it's not a question of if, it's when.

The attack pattern is depressingly simple: Your leaked Facebook password unlocks your email. Your email resets your bank password. Now someone in Belarus is buying AirPods on your dime, and you're spending Tuesday morning on hold with fraud departments. That's the good outcome. The bad one involves identity theft, ransomware, or your ex finding out what you really think about their new partner.

All of it's preventable with about an hour of setup.

---

## The Old Way (That Puts You at Risk)

You know how you do it:

- Reuse the same 1–3 passwords everywhere.

- Add a number or symbol when a site forces you. (`Password1` becomes `Password1!`)

- Write them down on a Post-it under your keyboard, or trust browser autofill.

- Use SMS text codes for 2FA, if you're feeling fancy.

It feels safe enough until it isn't. One breach compromises a dozen accounts. I've seen this happen to smart people—professors, lawyers, that friend who swears they're "careful online." Nobody thinks it'll be them until their Instagram is DMing crypto scams to their mom.

---

## The Better Way (That's Actually Easier)

We're going to make your digital life both more secure and less annoying by letting trusted tools remember everything for you.

### 1. Use a Password Manager

Let a tool remember everything. Your brain has better things to do.

- **Recommended:** Bitwarden—free, open source, secure

- **Alternative:** 1Password—paid, polished, excellent if you value hand-holding

- **Not Recommended:** LastPass—suffered more breaches than a medieval castle

Your vault's secured by one strong master password. A long phrase works great: `correct horse battery staple` beats `P@ssw0rd!` every time. The manager auto-fills logins across all your devices. You'll never type a password again, which means you'll never fat-finger one at 11 PM trying to order Thai food.
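For the curious, here is a minimal sketch of how a random passphrase like that can be generated. The tiny word list is a stand-in so the example runs on its own, and `make_passphrase` is a hypothetical helper, not something Bitwarden or 1Password exposes. A real generator draws from a list of thousands of words.

```python
import secrets

# Stand-in word list for illustration only; real lists have thousands of words.
WORDS = [
    "correct", "horse", "battery", "staple", "orbit", "maple",
    "violin", "harbor", "pickle", "lantern", "tundra", "gravel",
]

def make_passphrase(n_words=4, words=WORDS):
    """Join n_words words chosen with a cryptographically secure RNG."""
    return " ".join(secrets.choice(words) for _ in range(n_words))

print(make_passphrase())
```

The important detail is `secrets` rather than `random`: the words have to be picked unpredictably, not just haphazardly.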

### 2. Turn on 2FA for Critical Accounts

That's email, bank, social media, health portals—anything that would ruin your week if compromised. Skip SMS when possible and use an authenticator app instead:

- **Recommended:** Authy—easy setup, cloud backup, doesn't abandon you when you upgrade phones

- **Alternative:** Google Authenticator—works fine if you enjoy living dangerously without backups

This adds a one-time code every time you log in from a new device. Think of it as your accounts checking IDs at the door. Annoying at the door, reassuring when someone else tries to get in.
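If you're wondering where those codes come from, authenticator apps generally follow the TOTP standard (RFC 6238): the app and the site share a secret, and both hash it together with the current 30-second time window. Below is a rough sketch of that calculation using only Python's standard library. The `totp` function and the demo secret are illustrative assumptions, not code from Authy or Google Authenticator.

```python
import base64
import hashlib
import hmac
import struct
import time
from typing import Optional

def totp(secret_b32: str, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 time-based one-time code (HMAC-SHA1 variant)."""
    key = base64.b32decode(secret_b32, casefold=True)
    now = time.time() if at is None else at
    counter = int(now // step)                      # which 30-second window we are in
    digest = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F                      # dynamic truncation (RFC 4226)
    number = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(number % 10 ** digits).zfill(digits)

# Demo secret only: the ASCII test key used in the RFC's published examples.
DEMO_SECRET = base64.b32encode(b"12345678901234567890").decode()

print(totp(DEMO_SECRET))
```

Because the code depends on the clock, a stolen password alone isn't enough; an attacker would also need the secret stored in your app.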

---

## "But I Already Have a Strong Password!"

Congratulations. That's like having a really secure front door and leaving all the windows open.

Strong passwords don't matter if you reuse them. When Adobe got breached in 2013, they leaked 153 million passwords. If yours was `Tr0ub4dor&3` on Adobe *and* your bank, well, your strong password just became everyone's password.

Unique passwords per site plus 2FA is the formula. There's no shortcut, but there is an easy way: let the password manager generate and remember them for you.

---

## Step-by-Step Onboarding Plan

Don't try to fix everything tonight. Just follow this ramp:

1. Install Bitwarden (or 1Password) and create your vault.

2. Secure your email, bank, and Apple/Google accounts first. These are the crown jewels—everything else resets through them.

3. Turn on 2FA for those accounts using Authy.

4. Let Bitwarden start capturing passwords as you browse.

5. Each time you log into a site going forward:

- Save it in your vault.

- Generate a new, strong password (let it create something like `X7$mK9#pL2@qN4`—you'll never see it again anyway).

- Turn on 2FA if it's available.

6. Repeat. You'll be fully migrated in a few weeks without stress or existential dread.

---

## Handy Links

- Bitwarden: [https://bitwarden.com](https://bitwarden.com)

- 1Password: [https://1password.com](https://1password.com)

- Authy: [https://authy.com](https://authy.com)

---

## Summary

Digital security isn't about paranoia. It's about hygiene.

You lock your front door. You don't reuse toothbrushes. Don't reuse passwords or skip 2FA.

With the right tools, you can be way safer in under an hour and never have to memorize a password again. Your future self, the one not on hold with the bank, will thank you.

# Sisyphus Only Had One Boulder (I Have Four)

https://benjaminste.in/blog/2025/11/13/sisyphus-only-had-one-boulder/ · 2025-11-13

*I love a clean house. My family is the opposite. One day I'll win. Or will I?*

I love a clean house. No clutter, no mess, vacuumed rugs, mopped floors, wiped counters.

My family is the opposite. My wife LOVES dishes in the sink (I can only assume based on behavior). Zeke leaves his basketball and sneakers and slides and uniforms on every surface. Gabi has been doing science experiments in the kitchen for the past week, leaving a trail of hardened bread starter on the counter every night. Soup the dog loves sticks and brings one into the hallway and chews it to pieces, leaving a mess of bark (no pun intended) behind.

It's almost like my full-time job is following my family around and cleaning up after them. Not once, not from time to time, but every day. I walk in the door after a long day of work and find the same chaos I cleaned up that morning. I put the basketball back in the garage; it migrates to the dining room. I wipe down the counter; new experiments appear. I arrange the slides by the door; they teleport to the living room. I throw out yesterday's stick; Soup curates a new one by morning. Sisyphus only had one boulder. I have four.

But I know how temporary this all is. One day I'll win. The house will stay clean.

In just a few years, I'm going to come home to a perfectly clean house, just like I left it. No dirty underwear on the floor, no spilled ketchup on the couch, no chewed-up slippers. The basketball will stay in the garage. The kitchen will stay clean. The hallway will be silent, no clicking of dog nails, no trail of bark. I'll walk through rooms that echo, where everything is exactly where I left it, and I will ache for the mess. I'll want to trip over those slides. I'll want to scrub that bread starter. I'll want evidence that people I love are here, living, making their marks.

One day, sooner than I can imagine, I'll come home to order and cleanliness. And I'm going to feel oh so sad.

# The Stein Family Pet Naming Tradition: A Comprehensive History

https://benjaminste.in/blog/2025/11/13/the-stein-family-pet-naming-tradition/ · 2025-11-13

*Our dog is named Matzah Ball Soup. Before him, our beagle was named Kugel. The hamsters were Falafel and Babka. Our family has been naming pets after Jewish foods since the shtetl.*

We currently have a chihuahua/Jack Russell mix named Matzah Ball Soup. We call him Soup for short. He's a good dog, enthusiastic, loyal, prone to eating things he shouldn't. When we're at the vet and they call "Matzah Ball Soup Stein," I watch the other pet owners try to maintain neutral expressions.

People ask me why we do this.

The answer is simple: we've always done this. The Stein family has been naming pets after Jewish foods for at least six generations, possibly more. I've done the genealogical research. The records are surprisingly detailed.

Before Soup, we had a beagle named Kugel. Before Kugel, I had two hamsters named Falafel and Babka. Falafel was the best hamster. Babka ran away after just a few days. We never found him.

My father's generation continued the tradition with similar restraint. His childhood dog was named Challah, a golden retriever with a braided leather collar, which everyone agreed was too on-the-nose but also kind of perfect. His sister had a cat named Brisket who lived to be nineteen and spent the last four years unable to jump but unwilling to acknowledge this limitation. They'd find Brisket on the floor next to furniture, looking betrayed.

My grandfather's generation had a rooster named Schmaltz.

Zayde Herman kept this rooster in Brooklyn in the 1940s, which was apparently a time and place where keeping a rooster in a residential neighborhood was technically illegal but widely practiced. Schmaltz had a notably aggressive temperament and once chased a postal worker three blocks. The postal worker filed a formal complaint. My great-grandmother had to go to some kind of hearing and explain, with a straight face, that Schmaltz was a treasured family pet. The rooster was allowed to stay but had to be "supervised during postal delivery hours."

Herman's brother, my great-uncle Saul, had a parrot named Kishke. Kishke knew seventeen words in Yiddish and three in English. The English words were "hello," "cracker," and, inexplicably, "automobile." Saul swore he never taught the bird that last one. Kishke would sit in the window and yell "AUTOMOBILE" at passing cars. The neighbors found this delightful. When Kishke died in 1953, there was a genuine period of neighborhood mourning.

Going back another generation, we hit my great-great-grandfather's household in the Lower East Side. This is the 1910s. Records from this period are spottier, but family letters reference a series of pigeons (messenger pigeons, apparently, though who they were sending messages to is unclear). The pigeons had names like Latke, Blintze, and Knish. One letter from 1916, written by my great-great-aunt Rifka, mentions that "Latke returned from Weehawken with extraordinary news about Uncle Moishe's fabric venture." What news? How did the pigeon convey this? The historical record is silent.

We have actual photographic evidence for this part: my great-great-great-grandfather Yitzhak, still in the old country, kept a goat named Borscht.

This was in the shtetl of Wysokie Mazowieckie in Poland, sometime in the 1880s. We have a photograph, one of those formal, sepia-toned portraits where everyone looks vaguely startled. Yitzhak is seated. Standing next to him is Borscht the goat, wearing what appears to be a small ceremonial vest. On the back of the photograph, someone has written in Yiddish: "Yitzhak and Borscht, 1884."

According to family stories passed down with dubious accuracy, Borscht was tremendously intelligent. She could open gates, recognize her name in three languages, and had strong opinions about which children she'd allow to milk her. (She liked my great-great-grandmother Chaya. Did not care for Chaya's brother Avram. Would turn bodily away from him. Avram apparently never recovered from this rejection and moved to Minsk.)

But before Borscht, there was allegedly a sheep named Tsimmes.

I say "allegedly" because we're now in the realm of oral history, no photographic evidence. Tsimmes belonged to Yitzhak's father, Zalman. The sheep was apparently a champion wool producer and had a peculiar talent: she could predict rain. Not through normal animal behavior, but (according to the story) by bleating in a specific pattern. Two short bleats and a long one meant rain within three hours. One long bleat meant a storm. Three short bleats meant false alarm, she was just excited about feed.

Did this actually work? My grandfather swore his father told him it did. "Tsimmes was never wrong about weather," he'd say, as if this were a completely reasonable thing to assert about a sheep that died in approximately 1875.

And then we get to the genuine stuff of legend: Zalman's father, my great-great-great-great-great-grandfather Avram, reportedly kept a bear.

A bear named Cholent.

Now, I want to be clear: I cannot verify this. We're talking about the 1840s or 1850s, in a small shtetl in what was then the Russian Empire. Record-keeping was not robust. But the story has been passed down with such specific details that I'm inclined to believe some version of it happened.

According to family lore, Cholent was not a full-sized bear. It was a medium bear. My great-grandfather's exact phrasing when he told this story. Cholent was allegedly found as a cub, orphaned, and Avram nursed it back to health with goat's milk. The bear became domesticated (or as domesticated as a bear gets) and lived in an enclosure behind the house. Children from the village would come to see Cholent. Avram would charge a penny or the equivalent in eggs.

The story goes that Cholent was gentle except for one incident where a traveling tax collector tried to overcharge Avram on some grain assessment. Cholent sensed the tension and stood up on his hind legs. Just stood there. Didn't growl, didn't charge. Just stood up, which, if you're a bear, is all you really need to do. The tax collector apparently recalculated the assessment on the spot and never returned to that part of the district.

Did we really have a bear named Cholent? I choose to believe we did. It makes the rooster named Schmaltz seem downright conventional by comparison.

The tradition died out briefly in the mid-20th century. Assimilation pressures, probably. My uncle David had a dog named "Rex" in the 1960s. Rex! Like we were trying to blend in with some imaginary gentile standard of normal pet names. The family still talks about this as a regrettable period. "The Rex years," my father calls it, shaking his head.

But traditions have a way of resurging. When we got Kugel in 2008, it felt like a return to form. Something essential had been restored. And now we have Soup, who is currently asleep on the couch, unaware that he's part of a multi-generational legacy that may or may not include a medium-sized bear.

And after Soup, we'll get another pet. We haven't decided what yet. But the name is already chosen: Rugelach. It's a family decision, arrived at by consensus. My mother suggested Gefilte, but we all agreed that felt mean to the animal.

This is who we are. We are people who name pets after Jewish foods. We have been doing this since at least the 1840s, possibly longer. The tradition will continue. Somewhere, I imagine, the spirit of Cholent the medium bear approves.

# How to Make Your Blog AI Agent-Friendly (And Why You Should)

https://benjaminste.in/blog/2025/11/12/how-to-make-your-blog-ai-agent-friendly/ · 2025-11-12

*I added two lines to my blog's HTML header. Those two lines enabled AI agents to read my blog posts directly, not just humans with browsers anymore.*

If I'm going to write so much about AI agents (or have my AI agents write about themselves, as the case may be), I thought it was only appropriate to make my blog as AI agent-friendly as possible. I added a few lines of code to my blog. Some HTML meta tags, a JSON endpoint, a robots.txt update. Now AI agents can read my content as cleanly as humans do.

I'm not talking about "AI optimization" in some vague SEO sense. I mean direct access: ChatGPT, Claude, Perplexity can pull machine-readable versions of my posts. When someone asks Claude "What does Ben Stein think about AI agents?", it can pull my actual content, not a garbled web scrape.

The AI agent is just a better browser.

This isn't about deprioritizing humans or writing for machines. It's recognizing that humans increasingly research through AI intermediaries. Making content AI-friendly means recognizing that machines are now legitimate readers.

Publishers who resisted RSS feeds eventually discovered that millions of users preferred feed readers. The ones who embraced RSS early gained readership. The ones who resisted became invisible to an entire segment of their audience.

We're at that moment again. Except this time, AI agents don't just aggregate content—they synthesize it, answer questions with it, route research through it. If your content isn't accessible to these systems, you're invisible to everyone using them.

## Who This Is For

I write about AI, agents, and automation. My audience: developers building AI systems, founders thinking about agent strategy, technical leaders trying to understand where this technology is headed. Many of them use AI tools to research. When they ask Claude or ChatGPT about agent patterns, I want my writing to be part of that answer.

The web has always mediated between human intentions and machine capabilities. We write HTML because browsers need structure. We add alt text because screen readers need descriptions. We use semantic markup because search engines need context.

AI agents are the next reader in that progression. They need structure too. Different structure.

The technical implementation is surprisingly straightforward. Four components.

## The Implementation

**Alternate Format Links**

The foundation: give AI agents alternate representations of your content. On every blog post, I add `<link>` tags in the HTML head that point to JSON and Markdown versions:

```html
<link rel="alternate" type="application/json"
      href="https://benste.in/posts/ai-agent-friendly.json">
<link rel="alternate" type="text/markdown"
      href="https://benste.in/posts/ai-agent-friendly.md">
```

The JSON version contains structured data—title, author, date, content, categories. The Markdown version is clean prose without navigation chrome or site furniture. Both formats strip away everything except the actual post content.

When an AI agent encounters my blog post, it can request the JSON or Markdown version instead of parsing HTML. Cleaner, faster, more reliable than trying to extract content from complex page layouts.
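From the agent's side, discovery might look something like the sketch below. It uses Python's standard `html.parser` to collect `<link rel="alternate">` targets by MIME type. The class and function names are hypothetical, and real agents may well do this differently.

```python
from html.parser import HTMLParser

class AlternateLinkFinder(HTMLParser):
    """Collect <link rel="alternate"> targets, keyed by MIME type."""

    def __init__(self):
        super().__init__()
        self.alternates = {}

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rels = (attr.get("rel") or "").lower().split()
        if "alternate" in rels and attr.get("type") and attr.get("href"):
            self.alternates[attr["type"]] = attr["href"]

def find_alternates(html):
    finder = AlternateLinkFinder()
    finder.feed(html)
    return finder.alternates

PAGE = """<html><head>
<link rel="alternate" type="application/json"
      href="https://benste.in/posts/ai-agent-friendly.json">
<link rel="alternate" type="text/markdown"
      href="https://benste.in/posts/ai-agent-friendly.md">
</head><body><p>...</p></body></html>"""

alternates = find_alternates(PAGE)
# Prefer Markdown, fall back to JSON; with neither, the agent parses the HTML.
best = alternates.get("text/markdown") or alternates.get("application/json")
print(best)
```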

My blog generates these alternate versions automatically on build. Simple script: reads source files, outputs three formats. HTML for humans, JSON for structured access, Markdown for clean text. The entire pipeline runs in seconds.
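A build step along these lines could be sketched as follows. This is not my actual script: the `post` dictionary, its `slug` and `categories` values, and the `render_alternates` helper are assumptions made for illustration, and it prints the results instead of writing files.

```python
import json

def render_alternates(post):
    """Return {file suffix: file contents} for the non-HTML formats."""
    fields = ("title", "author", "date", "content", "categories")
    as_json = json.dumps({key: post[key] for key in fields}, indent=2)
    as_markdown = (
        f"# {post['title']}\n\n"
        f"*{post['author']}, {post['date']}*\n\n"
        f"{post['content']}\n"
    )
    return {".json": as_json, ".md": as_markdown}

# Hypothetical post record; a real build would read this from the source files.
post = {
    "slug": "ai-agent-friendly",
    "title": "How to Make Your Blog AI Agent-Friendly",
    "author": "Benjamin Stein",
    "date": "2025-11-12",
    "categories": ["ai"],
    "content": "If I'm going to write so much about AI agents...",
}

for suffix, body in render_alternates(post).items():
    print(f"--- {post['slug']}{suffix} ---")
    print(body)
```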

**Schema.org Structured Data**

Beyond alternate formats, I add semantic metadata using JSON-LD markup. This tells AI agents what type of content they're looking at and how it's organized:

```json
{
  "@context": "https://schema.org",
  "@type": "BlogPosting",
  "headline": "How to Make Your Blog AI Agent-Friendly",
  "author": {
    "@type": "Person",
    "name": "Benjamin Stein"
  },
  "datePublished": "2025-11-12",
  "articleBody": "..."
}
```

Schema.org markup has been around for years, primarily for search engine optimization. AI agents use it differently. They treat it as a semantic layer that clarifies relationships and content types. Instead of guessing whether a block of text is the main article or a sidebar, they read the structured data.

This isn't new technology—it's existing infrastructure being used for a new purpose. The same markup that helped Google understand your content now helps Claude.
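JSON-LD is normally embedded in the page inside a `<script type="application/ld+json">` element. A small sketch of generating that element at build time follows; `json_ld_script` is a hypothetical helper, and the escaping step is a precaution I'm assuming you'd want when the article body can contain arbitrary text.

```python
import json

def json_ld_script(headline, author, date_published, body):
    """Build a <script> element carrying BlogPosting structured data."""
    data = {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": date_published,
        "articleBody": body,
    }
    # Escape "</" so post text containing "</script>" cannot close the tag early.
    # "\/" is a valid JSON escape, so parsers still read the original text.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'

print(json_ld_script(
    "How to Make Your Blog AI Agent-Friendly",
    "Benjamin Stein",
    "2025-11-12",
    "...",
))
```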

**AI-Friendly robots.txt**

The robots.txt file tells automated systems which parts of your site they may access. It's a request, not an enforcement mechanism, but well-behaved crawlers honor it. For years, this meant telling search engine crawlers where they could go. Now it means explicitly permitting AI agents.

I added entries for known AI crawlers:

```
User-agent: GPTBot
Allow: /

User-agent: Claude-Web
Allow: /

User-agent: CCBot
Allow: /
```

The default behavior varies by agent. Some respect standard crawl permissions; others use proprietary identifiers. By explicitly allowing these bots, I signal that my content is available for AI systems to read and reference.
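One way to sanity-check rules like these is Python's built-in `urllib.robotparser`, sketched below. The `allowed` helper and the sample URL are illustrative assumptions, and actual crawlers may interpret the file with their own parsers.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: Claude-Web
Allow: /

User-agent: CCBot
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

def allowed(agent, url="https://benste.in/posts/ai-agent-friendly"):
    """Ask the parser whether this user agent may fetch the URL."""
    return parser.can_fetch(agent, url)

for agent in ("GPTBot", "Claude-Web", "CCBot"):
    print(agent, allowed(agent))
```

Note that with no `Disallow` rules anywhere in the file, this parser also permits agents that aren't listed. The explicit entries work as a signal of intent more than a change in behavior.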

I also added a comment pointing to my alternate formats:

```
# AI-friendly alternate formats available
# See /ai-content-manifest.json for details
```

This acts as a pointer for AI systems that know to look for machine-readable content.

**Content Manifest**

The final layer: a site-wide manifest file at `/ai-content-manifest.json`. This is my own convention—not a standard, just a pattern I implemented and documented.

The manifest describes my site's structure, lists all posts with their alternate format URLs, specifies attribution requirements, declares content policies:

```json
{
  "site": {
    "name": "Ben Stein's Blog",
    "url": "https://benste.in",
    "author": "Benjamin Stein"
  },
  "content_policy": {
    "ai_access": "permitted",
    "attribution_required": true,
    "commercial_use": "allowed_with_attribution"
  },
  "posts": [
    {
      "title": "How to Make Your Blog AI Agent-Friendly",
      "url": "https://benste.in/posts/ai-agent-friendly",
      "formats": {
        "html": "https://benste.in/posts/ai-agent-friendly",
        "json": "https://benste.in/posts/ai-agent-friendly.json",
        "markdown": "https://benste.in/posts/ai-agent-friendly.md"
      }
    }
  ]
}
```

This gives AI agents a single point of entry to understand everything available on my site. Rather than crawling page by page, they read the manifest and know exactly what content exists and how to access it.

I built this manifest as part of my static site generation process. Every time I publish a new post, the manifest updates automatically. Zero ongoing maintenance.
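Regenerating the manifest on each build could look roughly like this. It's a sketch rather than my real generator: `build_manifest` and the `slug` field are hypothetical, and the URL pattern is inferred from the example above.

```python
import json

SITE_URL = "https://benste.in"

def build_manifest(posts):
    """Assemble the manifest dict from a list of {title, slug} records."""
    entries = []
    for post in posts:
        url = f"{SITE_URL}/posts/{post['slug']}"
        entries.append({
            "title": post["title"],
            "url": url,
            "formats": {
                "html": url,
                "json": f"{url}.json",
                "markdown": f"{url}.md",
            },
        })
    return {
        "site": {
            "name": "Ben Stein's Blog",
            "url": SITE_URL,
            "author": "Benjamin Stein",
        },
        "content_policy": {
            "ai_access": "permitted",
            "attribution_required": True,
            "commercial_use": "allowed_with_attribution",
        },
        "posts": entries,
    }

manifest = build_manifest([
    {"title": "How to Make Your Blog AI Agent-Friendly", "slug": "ai-agent-friendly"},
])
print(json.dumps(manifest, indent=2))
```

Because the manifest is derived entirely from the post list, publishing a new post only means adding one record before the build runs.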

## Why You Should

I research through AI agents constantly now. When I'm learning about a new technology, I ask Claude to synthesize multiple sources. When I'm trying to understand someone's position on a topic, I ask for summaries of their writing. When I'm exploring a technical concept, I use AI to pull together relevant blog posts and documentation.

I'm not unique in this. The developers and founders I talk to use AI for research in similar ways. We're not replacing reading—we're routing our attention through systems that can surface, synthesize, and contextualize information faster than manual web browsing.

If your content isn't accessible to these systems, you're invisible to this entire workflow. Not because AI companies are gatekeeping, but because parsing HTML is messy and unreliable. Giving AI agents clean, structured access to your content is the difference between being included in synthesis and being skipped.

There's also a longer-term consideration. AI agents are getting better at following citations, attributing sources, and linking back to original content. When an AI system references my blog post and provides a direct link, that creates a path for readers to engage with my full argument in context. But only if the AI could read my content reliably in the first place.

This is the same dynamic that made RSS valuable. Feed readers didn't replace blogs—they multiplied reach. AI agents work similarly. They surface your ideas to people who might never have found them otherwise.

The philosophical objection—that we shouldn't optimize for machines—misses the point. We've always structured content for machines. HTML is machine structure. Semantic markup is machine structure. URLs are machine structure. The entire web is a negotiation between human expression and machine readability.

AI agents are just the next machine in that negotiation. Serving them doesn't mean serving them instead of humans. It means serving the humans who choose to read through them.

## What Happens Next

I don't know if AI content manifests will become a standard. Maybe someone will formalize this into a spec. Maybe site generators will build it in by default. Maybe AI companies will create better discovery mechanisms that make manual markup unnecessary.

But right now (late 2025), there's a window where making your content AI-friendly is both easy and advantageous. The people implementing this early will be the ones whose ideas show up in AI-mediated research.

I added those meta tags in October. I've already seen the results: AI systems citing my posts with clean attribution, developers finding my writing through Claude, researchers asking detailed questions about my arguments because the AI could surface the right content.

That's not magic. It's infrastructure. The same infrastructure that's made the web accessible for decades, now extended to a new class of readers who happen to be machines serving humans.

Everyone Says They Can Spot AI Writing—Can You? 🤔

https://benjaminste.in/blog/2025/11/12/everyone-says-they-can-spot-ai-writing/

2025-11-12T00:00:00Z

*I built a collaborative team of copywriters that run inside Claude Code (what?!) that automate my writing process--without sounding like an LLM*

After reading [this post](https://www.linkedin.com/feed/update/urn:li:activity:7394083873082703872/) by the indefatigable [Bryan Cantrill](https://bcantrill.dtrace.org/) on why you shouldn't use LLMs to write LinkedIn posts for you, I decided to let an LLM write a response. The audacity, I know. More specifically, I'd let my team of nine(!) autonomous AI agent copywriters do it.

To start, Bryan certainly nails the core problem with most people's use of LLMs for writing:

> "Because holy hell, the writing sucks. It's not that it's mediocre (though certainly that!), it's that it is so stylistically grating, riddled with emojis and single-sentence paragraphs and 'it's not just... but also' constructions and (yes!) em-dashes that some of us use naturally -- but most don't (or shouldn't)."

The issue isn't hard to identify: the one-shot "help me write this" prompt is the microwave burrito of content creation—technically food, requires minimal effort, and you feel vaguely ashamed afterward. It's generic, bland, and sounds like every other piece of AI-generated prose flooding the internet. The problem isn't using LLMs for writing. It's how we're using them.

## My Writing Process: Sophisticated but Laborious

For the past year, I've had a writing process that worked remarkably well. I've generated some of my best work using it, and the collaborative back-and-forth keeps my voice in the piece. Let me start by explaining my manual process, which was the inspiration for my new team of autonomous AI copywriters.

When I write substantial work (blog posts, business memos, long-form pieces), I typically have 3 tabs open: Google Docs, my homeboy ChattyG, and El Clauderino (if you're not into the whole brevity thing).

The actual process involves a cycle I repeat ad nauseam:

1. Core dump thoughts or write a first draft with Claude

2. Iterate a few times based on feedback

3. Copy-paste into Google Docs

4. Write sections myself

5. Switch to ChatGPT. Ask it to "hypercritically review" Claude's work (not rewrite it, but provide hypercritical constructive feedback)

6. Back to Claude. Paste the latest version along with ChatGPT's feedback and ask Claude to respond to the criticism

7. Copy-paste the result into Google Docs

8. Rinse. Repeat.

LLM vs LLM vs Ben vs LLM vs LLM. Turtles most of the way down.

What's interesting is that this cycle must repeat at different levels: sometimes working on document structure and narrative arc, sometimes refining individual paragraphs or sentences for clarity. And even after editing a single paragraph, I need the LLM to reread the entire document to ensure I didn't break the narrative arc or shift the tone.

The results are shockingly good. But all that copying, pasting, and reformatting is exhausting. It's the opposite of what AI tools should be. Like rinsing dishes before putting them in the dishwasher, then hand-washing them again when they come out.

How can I automate these tedious refinement cycles while keeping my voice?

## Claude Code Has Entered the Chat

As a perennial vibe coder and builder, I initially thought about building a web application with some sort of canvas and API calls to both Claude and ChatGPT. So, like any product person in 2025, I opened up Claude Code to start developing, and then realized something better was sitting right under my nose!

Claude Code is fundamentally an agent that accesses the underlying Claude models. More importantly, it supports "subagents" (individual AI agents with specific roles). I could build an orchestrator agent that works with multiple specialized subagents, each handling a distinct dimension of writing quality, and let them iterate until the piece is ready. Think Ocean's Eleven, except instead of robbing a casino, they're stealing back your authentic voice from the abyss of generic AI prose.

This approach makes sense because Claude Code was built for exactly this kind of work:

- **Designed for iterative refinement:** It's built around the cycle of making changes, getting feedback, and iterating (precisely what rigorous writing needs).

- **Native specialization:** Each agent has its own prompt and focus, invoked independently or in coordination.

- **File system integration:** Direct markdown file reading and writing, no database needed. Google Docs can import markdown natively.

## The System: Nine Specialist Agents, One Orchestrator

### The Nine Specialist Agents

Each agent operates in two modes: Review (score and critique) and Revise (fix and rescore). This dual capability is critical; they can both evaluate the current state and actually make improvements.

**1. Draft Developer** transforms rough drafts, outlines, and notes into complete prose. It runs first, before refinement begins. The agent expands placeholders and bullet points while preserving any quality writing already present. Think of it as converting architect's sketches into a standing structure—doesn't matter how polished the building is if you're still working from blueprints. It fills gaps and develops incomplete sections, but never rewrites what's already well-written. The refinement agents handle polish; this one handles completeness.

**2. Authenticity Editor** hunts AI tells—the distinctive phrases that scream "bot wrote this": "delve into," "it's important to note that," "in today's digital landscape," "leverage," "robust," "seamless," "multifaceted," "ecosystem" (unless literal). Zero tolerance. 9-10 means zero AI tells, sounds completely human and distinctive.

**3. Ben Voice Agent** knows how I write by analyzing my actual blog posts. The prompt includes an editorial profile with my rhetorical patterns: I open with concrete anecdotes, telegraph structure explicitly ("I break this into three components..."), and define things by systematically explaining what they aren't. I never use corporate speak, hedging, or listicle preambles. The prompt includes detailed examples of my sentence patterns, colon usage, and wry humor style.

**4. Humor Agent** ensures writing entertains with my sense of humor. It references sophisticated wit techniques (Dennis Miller's cultural deep cuts, Andrew Schulz's observational sharpness). Rules include "Cerebral over Cheap. Humor should demonstrate intelligence, not just land a joke." It uses techniques like cultural metaphors ("LinkedIn is the Gerald Ford of social networks"), deadpan absurdism, and intellectual callbacks. It knows when not to add humor too (legal contracts, academic papers, terms of service).

**5. Clarity Editor** focuses solely on whether ideas communicate clearly. It hunts ambiguity, vagueness, and unclear pronouns. If a reader could misinterpret something, it flags it.

**6. Structure Editor** evaluates organization, flow, pacing, and logical progression. It checks that openings engage, middles maintain momentum, and conclusions satisfy.

**7. Tone Consistency Editor** listens for tonal shifts and register mismatches. It ensures the voice stays consistent and appropriate throughout.

**8. Conflict Detector** catches regressions. This agent has trust issues (the productive kind). When fixing one issue introduces another (like the clarity agent adding AI tells, or the structure agent making the opening vague), this validator flags it. Someone needs to watch the watchers.

**9. Hallucination Detector** guards against invented content. Think of it as the bouncer at an exclusive club where only verified facts get past the velvet rope. It compares the original source with revisions and flags any facts, examples, or claims that weren't present originally. The distinction matters: removing hedging ("arguably one of the best" → "one of the best") is fine; inventing specific examples ("Companies like Slack, Zoom, and Microsoft use this") is forbidden.
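The agents themselves are prompts, not scripts, but two of the checks above are concrete enough to sketch as deterministic pre-checks. The code below is an illustration under that assumption: `find_ai_tells` scans for the phrases listed for the Authenticity Editor, and `new_names` is a deliberately crude stand-in for the Hallucination Detector that only notices mid-sentence capitalized words missing from the original. Both function names are hypothetical.

```python
import re

# Phrases taken from the Authenticity Editor description above.
AI_TELLS = [
    "delve into",
    "it's important to note that",
    "in today's digital landscape",
    "leverage",
    "robust",
    "seamless",
    "multifaceted",
    "ecosystem",
]


def find_ai_tells(text: str) -> list[str]:
    """Return the listed phrases found in text (case-insensitive, whole words only)."""
    lowered = text.lower()
    return [p for p in AI_TELLS if re.search(rf"\b{re.escape(p)}\b", lowered)]


def _names(text: str) -> set[str]:
    # Capitalized words that follow a lowercase letter or comma plus a space,
    # which skips ordinary sentence-initial capitals.
    return set(re.findall(r"(?<=[a-z,] )[A-Z][a-z]+\b", text))


def new_names(original: str, revision: str) -> set[str]:
    """Names that appear in the revision but nowhere in the original."""
    return _names(revision) - _names(original)


original = "This is arguably one of the best tools."
hedge_removed = "This is one of the best tools."
invented = "This is one of the best tools. Companies like Slack, Zoom, and Microsoft use this."

tells = find_ai_tells("Let's delve into our robust ecosystem.")
ok_diff = new_names(original, hedge_removed)   # removing a hedge adds no names
bad_diff = new_names(original, invented)       # invented examples add three
```

A check like this can't judge whether a claim is true. It only surfaces candidates for a human or an LLM reviewer to look at, which is the same division of labor the detector is meant to enforce.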

### How the Orchestrator Coordinates Them

When I run `/refine`, the Writing Orchestrator coordinates multiple specialist agents through an iterative refinement loop:

1. Asks discovery questions about document type, audience, and purpose

2. Launches specialist agents in parallel to review the draft

3. Collects scores from each agent (target: 8+/10 for each dimension)

4. Launches agents sequentially to revise in priority order

5. Runs validators to catch conflicts and hallucinations

6. Iterates up to 3 times until all scores reach 8+/10

If a score falls below 8, that agent revises again.
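The review-revise-rescore loop can be sketched in a few lines. This is a simplified model, not the orchestrator's actual prompt: it skips the discovery questions, runs reviews sequentially rather than in parallel, and leaves out the validators. The agent interface (a `review` function returning a score and a `revise` function returning a new draft) and the toy agents are assumptions made for the example.

```python
from typing import Callable

TARGET_SCORE = 8     # "target: 8+/10 for each dimension"
MAX_ITERATIONS = 3   # "iterates up to 3 times"

# Hypothetical agent interface: review returns a 1-10 score, revise returns a new draft.
Reviewer = Callable[[str], int]
Reviser = Callable[[str], str]


def score_all(draft: str, agents: dict[str, tuple[Reviewer, Reviser]]) -> dict[str, int]:
    """Ask every agent to score the current draft."""
    return {name: review(draft) for name, (review, _revise) in agents.items()}


def refine(draft: str, agents: dict[str, tuple[Reviewer, Reviser]]) -> tuple[str, dict[str, int]]:
    """Revise with every agent scoring below target, up to MAX_ITERATIONS rounds."""
    scores = score_all(draft, agents)
    for _attempt in range(MAX_ITERATIONS):
        failing = [name for name, score in scores.items() if score < TARGET_SCORE]
        if not failing:
            break
        for name in failing:  # dict order stands in for priority order
            _review, revise = agents[name]
            draft = revise(draft)
        # Re-score everything, since one agent's fix can lower another's score.
        scores = score_all(draft, agents)
    return draft, scores


# Toy agents: "clarity" dislikes the word "very", "structure" wants a closing period.
toy_agents = {
    "clarity": (lambda d: 9 if "very" not in d else 5, lambda d: d.replace("very ", "")),
    "structure": (lambda d: 9 if d.endswith(".") else 6, lambda d: d + "."),
}
final_draft, final_scores = refine("This is very very good", toy_agents)
```

Re-scoring every dimension after each round, rather than only the ones that were revised, is what lets the loop notice when one fix degrades something else.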

### The Iteration Framework

Agents run in priority order:

**TIER 1: Non-Negotiable**

- Authenticity (zero AI tells, always)

- Ben Voice (when applicable)

**TIER 2: High Priority**

- Clarity

- Structure

**TIER 3: Polish**

- Tone Consistency

- Humor (when applicable)

Then conflict and hallucination detectors run. The system iterates until all scores hit 8+/10. One agent may undo another's work (that's expected). The repeated iteration with scoring catches and resolves these tensions. It's recursive refinement all the way down. Remember those turtles? They've all gone to journalism school.
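One way to express that ordering in code is a tier table plus a stable sort. The agent identifiers and the `run_order` function below are hypothetical; only the tier assignments and the "when applicable" flags come from the list above.

```python
# Tier assignments copied from the list above; a lower tier number runs first.
TIERS = {
    "authenticity": 1,
    "ben_voice": 1,
    "clarity": 2,
    "structure": 2,
    "tone_consistency": 3,
    "humor": 3,
}
# The validators always run after the editing agents.
VALIDATORS = ["conflict_detector", "hallucination_detector"]


def run_order(use_voice: bool = True, use_humor: bool = True) -> list[str]:
    """Editing agents sorted by tier, followed by the two validators."""
    skipped = set()
    if not use_voice:
        skipped.add("ben_voice")   # "when applicable"
    if not use_humor:
        skipped.add("humor")       # "when applicable"
    editors = [name for name in TIERS if name not in skipped]
    # sorted() is stable, so agents keep their listed order within a tier.
    return sorted(editors, key=TIERS.__getitem__) + VALIDATORS


blog_post_order = run_order()
contract_order = run_order(use_voice=False, use_humor=False)
```

For something like a legal contract, where neither my voice nor humor applies, the second call drops both optional agents and leaves the rest of the order untouched.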

## The User Interface Is Still Being Invented

The unfortunate nerdy reality: While I love my writing agents, I'm using Claude Code's command-line interface as my actual UI. Yes, I'm aware this makes me the guy whipping out a TI-83 calculator at a dinner party. But hear me out.

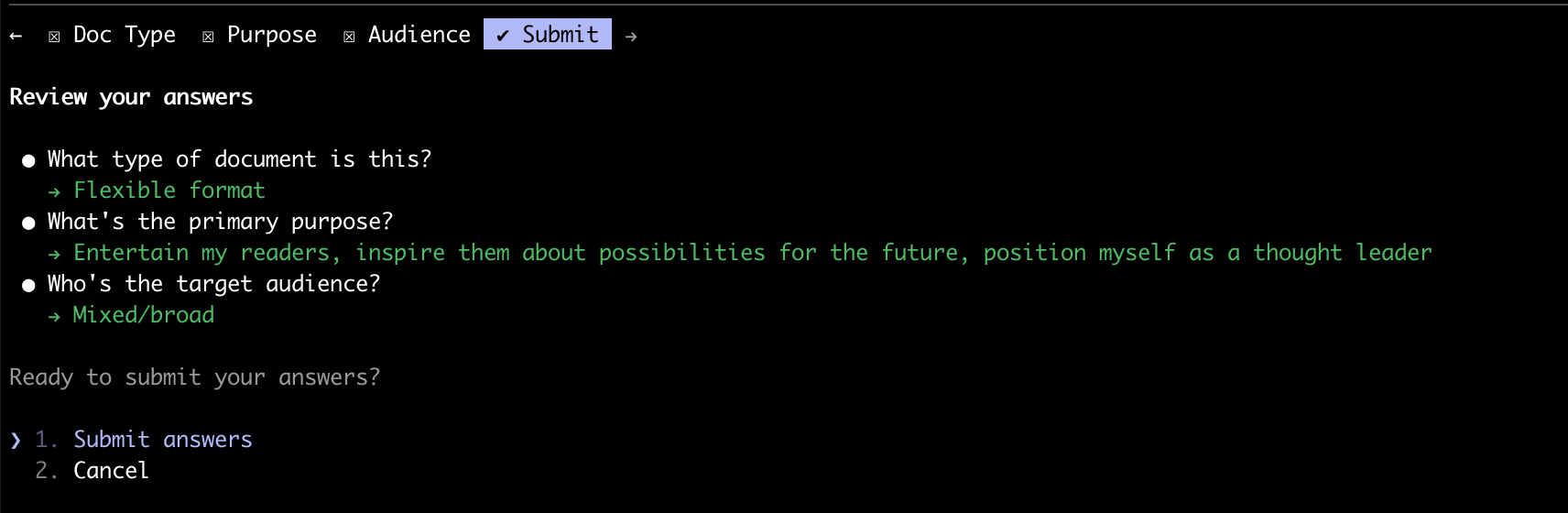

I start by running `/refine` which triggers an interactive menu (did you know Claude Code has a built-in customizable menu system? I didn't.):

```
How much guidance do you want to provide?

1. Decide for me - I'll analyze and choose the best approach
2. Quick setup (2 questions) - Just purpose and audience
3. Full control (4 questions) - Let me specify all parameters
```

*Claude Code's interactive review interface confirming document parameters*

Is bash or zsh a reasonable interface for most people? No. Certainly not. But for demonstrating what's possible with agentic architecture, it's surprisingly effective. My CLI is to document editing what vinyl is to music formats: objectively inferior in convenience, inexplicably satisfying to enthusiasts, requires you to explain your choices at parties (to people who don't care), yet signals to others that you have genuine opinions about things.

## Why This Matters

I agree with Bryan's thesis that one-shot "Help Me Write" prompts lead to generic, boring content. *But what's actually possible with today's LLMs and agentic architecture goes far beyond what most people imagine.*

This system doesn't replace human judgment. I still decide what matters, what's true, and what the piece should say. I tidy up, add more wit, and make sure it's truly words that I would stand behind. But it automates the tedious parts of a sophisticated human-in-the-loop editorial process: running multiple editorial passes, checking consistency, hunting AI tells, ensuring my authentic voice comes through.

We definitely need better user interfaces. The right UI for writing isn't a command line. It's also not Google Docs with an AI sidebar, or Notion with integrated agents. It's something else entirely, and the world is still iterating on what that should be.